Same With AI: When Your Task's Priority Is Not What You Expect It To Be

PART 1 -- The Prompting Approach

SAME WITH AI

Gabor

3/2/2026 · 7 min read

This is the first post in a new series where we revisit previously discussed engineering problems — this time with AI as part of the toolbox.

In each post, we’ll walk through our process: which models we tried, how we prompted them, what worked, what didn’t, and what we would do differently today.

The goal is simple: to learn from real cases so we can use AI more effectively — and just as importantly, recognize when not to use it. We’re sharing these experiences in the hope that they’ll help you refine your own approach as well.

The issue

Recently, we shared an interesting case on this blog about how tricky and challenging it can be to find the root cause of strange behavior in an embedded system:

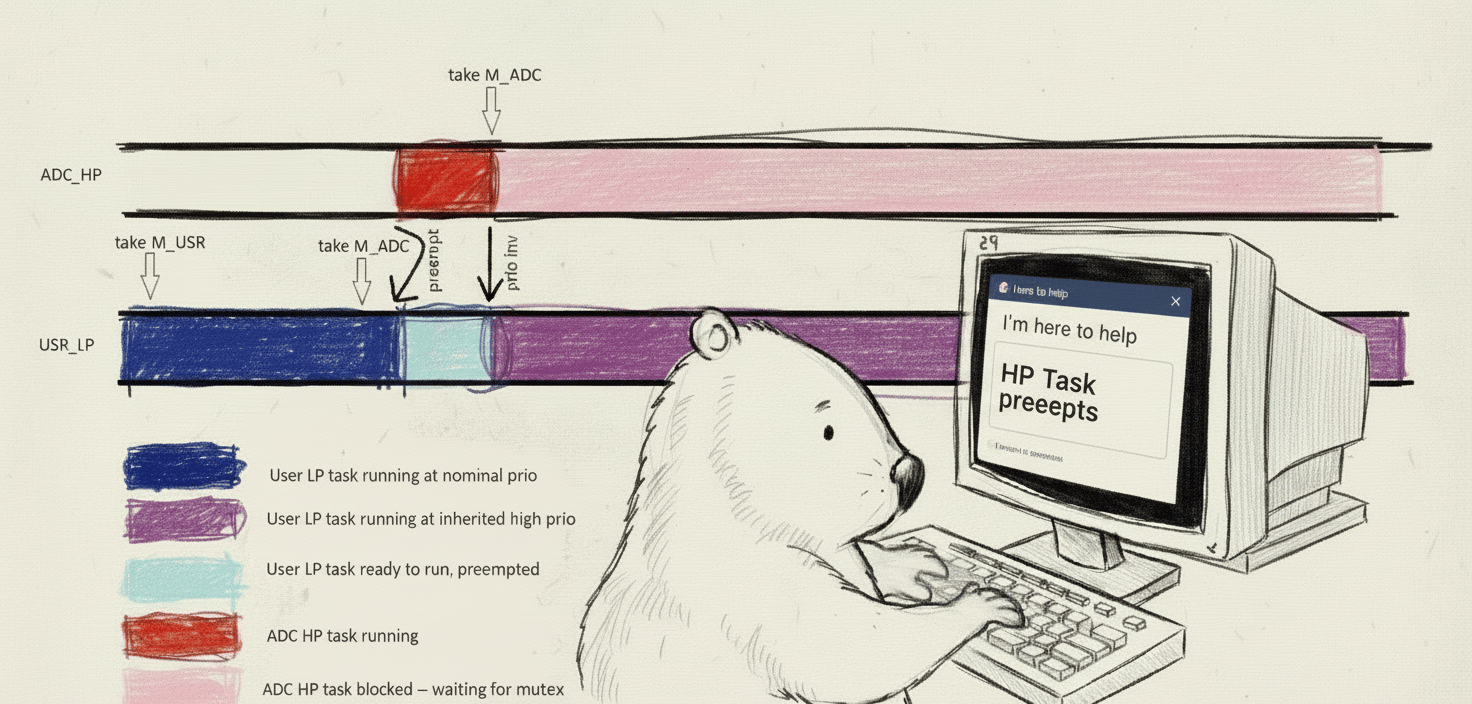

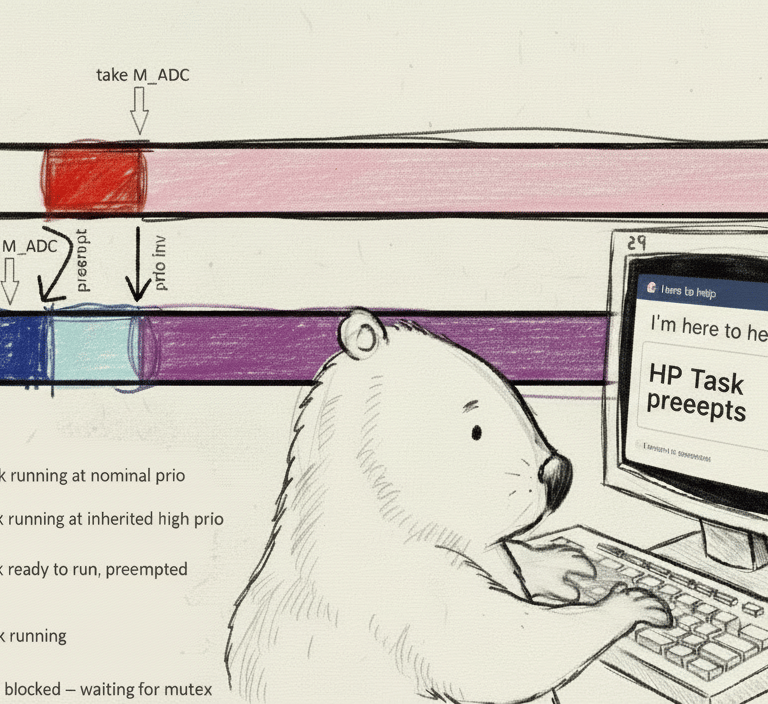

When Your Task's Priority Is Not What You Expect It To Be

This issue was originally handled around 2020, when AI was not yet part of our everyday lives as it is today. We became curious about how AI might have helped with the investigation if we had started the whole process with its support.

Simplified Scenario

To keep things simple, we rewrote the original case into the most minimal form we could imagine — one that still captures the same circumstances from an RTOS perspective:

A timer with an ISR firing every x milliseconds

A high-priority task that is woken up by an event sent from the timer ISR

A mutex that:

Is locked by the high-priority task at some point

Is also used internally by the timer API functions

A low-priority task that:

Locks its own mutex in every iteration

Calls a timer API function (which locks and releases the aforementioned timer mutex)

Enters a waiting loop while still holding its own mutex — a loop that may run for a long time

Releases its own mutex only before blocking to wait for the next wake-up event and starting the next iteration
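The lock nesting in this scenario is easy to lose track of in prose. The host-side C sketch below is a plain model rather than FreeRTOS code, and the names `lp_step`, `lp_iteration` and `held_after` are hypothetical. It walks through one iteration of the low-priority task and counts how many mutexes the task holds after each step: two while the timer API function is running, and still one (its own mutex) during the long waiting loop.

```c
#include <stddef.h>

/* Hypothetical steps of one low-priority task iteration, in the order
   described in the scenario. This is a plain model, not RTOS code. */
typedef enum {
    LP_TAKE_LP_MUTEX,  /* lock its own mutex                          */
    LP_TAKE_HP_MUTEX,  /* timer API function locks the timer mutex    */
    LP_GIVE_HP_MUTEX,  /* timer API function releases it and returns  */
    LP_WAIT_LOOP,      /* long waiting loop, own mutex still held     */
    LP_GIVE_LP_MUTEX,  /* release own mutex                           */
    LP_BLOCK_ON_EVENT  /* block until the next wake-up event          */
} lp_step;

static const lp_step lp_iteration[] = {
    LP_TAKE_LP_MUTEX, LP_TAKE_HP_MUTEX, LP_GIVE_HP_MUTEX,
    LP_WAIT_LOOP, LP_GIVE_LP_MUTEX, LP_BLOCK_ON_EVENT
};

#define LP_STEPS (sizeof lp_iteration / sizeof lp_iteration[0])

/* Returns how many mutexes the low-priority task holds after executing
   steps 0..index (inclusive) of one iteration. */
static int held_after(size_t index)
{
    int held = 0;
    for (size_t i = 0; i <= index && i < LP_STEPS; ++i) {
        switch (lp_iteration[i]) {
        case LP_TAKE_LP_MUTEX:
        case LP_TAKE_HP_MUTEX:
            ++held;
            break;
        case LP_GIVE_HP_MUTEX:
        case LP_GIVE_LP_MUTEX:
            --held;
            break;
        default:
            break; /* waiting and blocking do not change mutex ownership */
        }
    }
    return held;
}
```

For example, `held_after(1)` (inside the timer API call) returns 2, while `held_after(3)` (the waiting loop) returns 1.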

The Models We Used

We evaluated the following AI models:

Claude Sonnet 4.6

GPT-5.2

Composer 1.5 (this choice may seem unusual, but it was driven purely by curiosity)

Below is what we discovered.

The Prompting Approach

The most straightforward way to begin would be to simply describe the case to an AI model and ask for its opinion. That is exactly what we tried first.

Below is the input we provided to the models.

1. Embedded system with FreeRTOS.

2. added a timer that fires every x milliseconds, then sends an event from its own ISR to a high-prio task. As the task was waiting for this event, it now can run.

3. The high-prio task locks a timer related mutex (hp_mutex), modifies some shared structure, then releases hp_mutex and starts waiting for the event again.

4. My low-prio user task locks its own mutex (lp_mutex) then calls a timer related API function, then enters a waiting loop that can possibly take long, then releases lp_mutex and starts waiting for an event.

5. the timer API function locks hp_mutex, accesses the timer related structure, then releases hp_mutex and returns.

--> Result: sometimes my high-prio timer task doesn't run until my low-prio user task leaves the waiting loop. Interrupts keep firing and sending events to the high-prio task. Why stuck?

Originally, we planned to present the outputs of the various models separately. However, their analyses were so similar that it quickly became clear there was little value in detailing them one by one.

That said, here is how AI — essentially all of the tested models — helped us approach this problem.

Recognizing Priority Inversion

As expected, the models had no difficulty identifying that the issue was related to priority inheritance and, consequently, to priority inversion.

This was a crucial first step. Correctly recognizing the presence of a priority inversion scenario is fundamental when analyzing complex RTOS behavior, especially when mutexes and ISRs interact across different priority levels.
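As a reminder of the terms, the following sketch models the textbook case. It assumes three tasks: a low-priority task holding a mutex, a high-priority task blocked on that mutex, and a hypothetical medium-priority task that is not part of our scenario but is what makes the inversion unbounded when there is no inheritance. Priorities follow the FreeRTOS convention (a higher number means a higher priority); the names and values are illustrative only.

```c
#include <stdbool.h>

/* Higher number = higher priority, matching the FreeRTOS convention. */
enum { PRIO_LOW = 1, PRIO_MEDIUM = 2, PRIO_HIGH = 3 };

/* Identifiers for the three tasks in the model. */
typedef enum { TASK_LOW, TASK_MEDIUM, TASK_HIGH } task_id;

/* Situation: TASK_LOW holds a mutex, TASK_HIGH is blocked waiting for it,
   and the hypothetical TASK_MEDIUM is ready to run.
   Returns the task the scheduler would run. */
static task_id running_task(bool inheritance_enabled)
{
    /* With inheritance, the holder temporarily runs at the waiter's priority. */
    int low_effective = inheritance_enabled ? PRIO_HIGH : PRIO_LOW;

    /* TASK_HIGH is blocked, so only TASK_LOW and TASK_MEDIUM compete. */
    return (low_effective > PRIO_MEDIUM) ? TASK_LOW : TASK_MEDIUM;
}
```

Without inheritance, `running_task(false)` yields the medium-priority task, so the high-priority task effectively waits behind both lower-priority tasks; with inheritance, the mutex holder runs first and can release the mutex sooner. FreeRTOS mutexes include such an inheritance mechanism, while binary semaphores do not.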

Below are selected excerpts from the responses:

Composer 1.5

"Priority inheritance will raise the low‑priority task’s priority"

GPT-5.2

"The HP task does run immediately, but it blocks on hp_mutex held by LP (priority inheritance)"

Claude Sonnet 4.6

"This is a classic priority inversion problem, compounded by..."

Guesses About the Root Cause

Although the models correctly identified priority inversion as a central theme, their deeper analysis led in several different directions.

Some of these suggestions were valid and worth checking. Others were technically plausible but clearly misaligned with the actual simplicity of the system.

The main categories of guesses were the following:

(1) High-Level / Application Design Issues

Several responses assumed the problem might originate from architectural or system-level design flaws:

Buffer pool exhaustion

This one was particularly interesting. The tone of the answer suggested the model imagined a full communication stack with dynamic buffers, rather than a relatively simple timer service. While buffer exhaustion can absolutely cause strange behavior, it was not relevant in this case.

Queue saturation

Some responses suggested that the high-priority task might be enqueueing timer data faster than the low-priority task could consume it. Again, a valid concern in many systems, but not applicable here.

Deadlock / circular dependency

A reasonable suspicion whenever multiple mutexes are involved, but not the actual issue in this scenario.

Stack overflow or memory corruption

Always good to keep in mind in embedded systems, but again, not the culprit here.

Low-priority task waiting while inside a critical section

This was closer to the mark conceptually, but still not the root cause.
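The deadlock hypothesis from the list above can be ruled out on paper by recording, for each task, which mutex it takes while already holding another, and checking the resulting lock-order graph for a cycle. The sketch below assumes only the two mutexes of our scenario; `lock_graph`, `visit` and `has_lock_order_cycle` are hypothetical helpers. Since the high-priority task only ever takes hp_mutex, the only edge is lp_mutex -> hp_mutex, and no cycle exists.

```c
#include <stdbool.h>

#define N_MUTEX 2
enum { LP_MUTEX = 0, HP_MUTEX = 1 };

/* order[a][b] is true if some task takes mutex b while already holding a. */
typedef struct {
    bool order[N_MUTEX][N_MUTEX];
} lock_graph;

/* Depth-first search; state: 0 = unvisited, 1 = on current path, 2 = done. */
static bool visit(const lock_graph *g, int m, int state[N_MUTEX])
{
    state[m] = 1;
    for (int next = 0; next < N_MUTEX; ++next) {
        if (!g->order[m][next])
            continue;
        if (state[next] == 1)
            return true;               /* back edge: lock-order cycle */
        if (state[next] == 0 && visit(g, next, state))
            return true;
    }
    state[m] = 2;
    return false;
}

/* True if the recorded lock orders contain a cycle (potential deadlock). */
static bool has_lock_order_cycle(const lock_graph *g)
{
    int state[N_MUTEX] = {0};
    for (int m = 0; m < N_MUTEX; ++m)
        if (state[m] == 0 && visit(g, m, state))
            return true;
    return false;
}
```

Adding a hypothetical reverse edge (some task taking lp_mutex while holding hp_mutex) is what would create a cycle and make a deadlock possible.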

(2) FreeRTOS-Related Misuse or Configuration Issues

The models also explored potential RTOS-specific pitfalls:

configMAX_SYSCALL_INTERRUPT_PRIORITY misconfiguration

This was a very legitimate suggestion. Misconfiguring this parameter can absolutely lead to subtle and hard-to-diagnose behavior.

portYIELD_FROM_ISR misuse

Another completely valid hypothesis. Incorrect use (or omission) of portYIELD_FROM_ISR() can prevent proper context switching from an ISR.

Furthermore, the models also correctly identified the critical moment in the sequence: the low-priority task holds hp_mutex (meaning it is inside the timer API call), and at that exact point the high-priority timer task preempts it.

This observation was accurate and important. That specific timing window is indeed where the problematic interaction begins.

However, beyond this point the analysis started to drift. The models explored several directions that, while technically reasonable and potentially helpful in other contexts, were not actually close to the real root cause.

The actual issue, once again, was the failure to take into account FreeRTOS’s simplified priority inheritance mechanism and the subtle consequences that follow from it.

Below is the exact citation from GPT-5.2 on the portYIELD_FROM_ISR hypothesis:

The ISR wakes the HP task, but you don’t request a switch-from-ISR

When you “send an event from ISR” (queue/semaphore/event group/task notify), FreeRTOS will often set a flag like xHigherPriorityTaskWoken. If you don’t end the ISR with the yield macro, the scheduler won’t immediately switch to the just-unblocked higher-priority task. Then the HP task won’t run until the next tick or until the currently running task blocks/yields (which, in your case, is “LP enters the waiting loop”).

Correct pattern (generic):

xQueueSendFromISR / xSemaphoreGiveFromISR / xEventGroupSetBitsFromISR / vTaskNotifyGiveFromISR …

check xHigherPriorityTaskWoken

call the port macro (portYIELD_FROM_ISR(xHigherPriorityTaskWoken) or portEND_SWITCHING_ISR(...) depending on port)

If you miss that final yield, the behavior is exactly: “sometimes it runs later, when LP blocks”.

A legitimate comment — and an important one.

Misuse or misconfiguration of portYIELD_FROM_ISR() is a well-known source of subtle scheduling issues. In some FreeRTOS ports it appears more frequently than in others, depending on how the port layer handles interrupt priority masking and context switching.
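The pattern quoted from GPT-5.2 above can be illustrated with a small host-side simulation. On the target, the ISR would call a FromISR API such as vTaskNotifyGiveFromISR() and end with portYIELD_FROM_ISR(xHigherPriorityTaskWoken). In this sketch both steps are replaced by hypothetical functions (`isr_notify`, `isr_exit`) so that the decision logic can be run on a PC; it assumes that, without the yield, the pending switch only happens later (for example at the next tick).

```c
#include <stdbool.h>

/* Hypothetical scheduler state for the model. */
typedef struct {
    int running_prio;   /* priority of the task interrupted by the ISR      */
    int ready_hp_prio;  /* priority of the task unblocked by the ISR, 0 = none */
} sched_model;

/* Models a ...FromISR() call: unblocks the HP task and sets *higher_woken
   only if it outranks the interrupted task. It never clears the flag,
   so the caller initializes it to false. */
static void isr_notify(sched_model *s, int hp_prio, bool *higher_woken)
{
    s->ready_hp_prio = hp_prio;
    if (hp_prio > s->running_prio)
        *higher_woken = true;
}

/* Models the end of the ISR and returns the priority of the task that runs
   right after the interrupt returns. yield_requested == false corresponds
   to omitting portYIELD_FROM_ISR(). */
static int isr_exit(const sched_model *s, bool higher_woken, bool yield_requested)
{
    if (higher_woken && yield_requested)
        return s->ready_hp_prio;    /* context switch on interrupt exit */
    return s->running_prio;         /* interrupted task simply resumes */
}
```

In this model, as in the FreeRTOS API, the flag is only raised when the woken task has a strictly higher priority than the interrupted one, which mirrors why the documentation asks callers to initialize xHigherPriorityTaskWoken to pdFALSE before the call.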

It is also worth noting that we never mentioned the MCU in our prompt. The models were not given any hardware-specific context.

For completeness: the target platform was an STM32F407, built around an ARM Cortex-M4 core.

That detail matters, because on Cortex-M devices the interaction between:

interrupt priorities

configMAX_SYSCALL_INTERRUPT_PRIORITY

PendSV triggering

and portYIELD_FROM_ISR()

follows a very specific and well-documented model.

Despite not being told any of this, the AI still correctly raised port-relevant (and particularly Cortex-M-relevant) concerns. That, in itself, was an interesting observation.

However, once again, none of these were the actual root cause.
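For reference, the Cortex-M interrupt-priority rule mentioned above can be sketched as a small check. On Cortex-M a lower numeric priority value means a more urgent interrupt, and an ISR may call FreeRTOS FromISR API functions only if its priority is numerically equal to or greater than configMAX_SYSCALL_INTERRUPT_PRIORITY. The sketch assumes the STM32F407's 4 implemented priority bits and an example configLIBRARY_MAX_SYSCALL_INTERRUPT_PRIORITY of 5; the macro and function names are hypothetical stand-ins for the FreeRTOSConfig.h settings.

```c
#include <stdbool.h>
#include <stdint.h>

/* Assumed values: the STM32F407 implements 4 NVIC priority bits; 5 is an
   example configLIBRARY_MAX_SYSCALL_INTERRUPT_PRIORITY. */
#define PRIO_BITS                 4u
#define LIBRARY_MAX_SYSCALL_PRIO  5u

/* Priority as it appears in the 8-bit priority register: the implemented
   bits are the most significant ones. */
static uint8_t shifted_prio(uint8_t library_prio)
{
    return (uint8_t)(library_prio << (8u - PRIO_BITS));
}

/* Plays the role of configMAX_SYSCALL_INTERRUPT_PRIORITY in this model. */
static uint8_t max_syscall_prio(void)
{
    return shifted_prio(LIBRARY_MAX_SYSCALL_PRIO);
}

/* An ISR may use ...FromISR() APIs only if its priority is numerically
   equal to or greater than the threshold (i.e. logically not above it). */
static bool isr_may_use_rtos_api(uint8_t library_prio)
{
    return shifted_prio(library_prio) >= max_syscall_prio();
}
```

Priority 0, the reset default of every Cortex-M interrupt, fails this check. When configASSERT() is defined, the Cortex-M4 ports of FreeRTOS check for this kind of violation at runtime, which makes enabling it a cheap step during an investigation like this one.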

(3) The “Are You Sure?” Tier of Suggestions

Some of the responses also ventured into what we might call the “let’s double-check the obvious” category:

Is configUSE_PREEMPTION actually set to a non-zero value?

Are you absolutely sure the low-priority task doesn’t hold hp_mutex when this happens?

Are you sure interrupts are configured correctly?

These are not unreasonable questions. In fact, they are often necessary. Many real-world bugs turn out to be configuration mistakes or incorrect assumptions.

But at this stage, they felt more like sanity checks than genuine progress toward the root cause.

Conclusion

To be fair, reading through most of the answers was actually somewhat reassuring. The models clearly understood the general circumstances and correctly identified the class of problem we were dealing with. From a conceptual standpoint, the system dynamics were well interpreted.

At no point did I feel that the issue stemmed from an unclear description or missing context in the prompt.

And yet — the real solution, surprisingly, never surfaced.

The behavior in question is neither undocumented nor hidden in obscure forum discussions. The relevant detail (FreeRTOS’s simplified priority inheritance mechanism) is explicitly and publicly described in the official FreeRTOS documentation — specifically in the Mutexes chapter.
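To make that detail concrete: as we read the Mutexes chapter, a task that has inherited a priority keeps its highest inherited priority until it has released all the mutexes it holds, regardless of the order in which it releases them. The host-side model below contrasts that rule with a full inheritance scheme that would recompute the priority from the mutexes still held. It is not the kernel source; `task_model`, `inherit`, `give_simplified` and `give_full` are hypothetical names.

```c
/* Minimal model of a task's priority bookkeeping. */
typedef struct {
    int base_prio;      /* priority assigned by the application  */
    int prio;           /* current (possibly inherited) priority  */
    int mutexes_held;   /* number of mutexes currently held       */
} task_model;

/* The holder inherits the priority of a higher-priority task that blocks
   on one of its mutexes. */
static void inherit(task_model *holder, int waiter_prio)
{
    if (waiter_prio > holder->prio)
        holder->prio = waiter_prio;
}

/* Simplified scheme (the documented FreeRTOS rule): the base priority is
   restored only once the task holds no mutexes at all. */
static void give_simplified(task_model *t)
{
    t->mutexes_held--;
    if (t->mutexes_held == 0)
        t->prio = t->base_prio;
}

/* Full scheme, for comparison: recompute from the highest priority still
   waiting on any mutex the task holds (supplied by the caller in this
   model; 0 means nobody is waiting). */
static void give_full(task_model *t, int highest_remaining_waiter)
{
    t->mutexes_held--;
    t->prio = highest_remaining_waiter > t->base_prio
                  ? highest_remaining_waiter
                  : t->base_prio;
}
```

For example, a task with base priority 1 that holds two mutexes and inherits priority 3 stays at 3 after `give_simplified()` releases one of them, and only returns to 1 when the second is released; `give_full()` would drop it back to 1 right away. Applied to our scenario, the model suggests that the low-priority task can enter its long waiting loop still running at the high-priority task's priority, because it still holds lp_mutex. Since FreeRTOS does not preempt a running task in favor of a newly readied task of merely equal priority, the high-priority task can then no longer preempt the loop; how much CPU time it gets depends on other settings, such as time slicing.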

Given that this information is readily available in the official documentation, it was somewhat unexpected that none of the models converged on that precise explanation.

In the end, the exercise was still valuable. It demonstrated that AI is very capable of:

Recognizing well-known concurrency patterns

Identifying common RTOS misuses

Suggesting valid configuration checks

But it also showed a limitation:

When the root cause depends on subtle, implementation-specific details — even if those details are publicly documented — the models may remain at the level of generic reasoning unless guided very precisely.

And in embedded systems, as we all know, the devil is often in exactly those details.

Iterating Further

At this point, we did what anyone trying to solve the issue solely with AI assistance would probably do:

We "followed" the suggestions.

We "verified" the configuration.

Then we went back to the model and responded: Yes, checked. Seems OK.

After a few iterations, however, the models appeared to run out of new angles. The answers began to converge toward variations of the same idea:

Something must still be wrong with one of the previously suggested areas.

In other words, the reasoning loop had stalled. The models were circling around plausible causes — but none of them were the actual one.

Soon, we will revisit this case — but with a different approach.

Instead of relying purely on descriptive prompting, we plan to recreate a minimal environment that reliably reproduces the issue. With that in place, we will involve coding-oriented models and let them analyze the actual implementation rather than just a textual description of the behavior.

It will be interesting to see whether providing executable context — instead of conceptual explanation alone — leads to a different outcome.

Another point to consider is how strongly these results depend on the models’ limited access to up-to-date resources, such as the latest FreeRTOS documentation.

Stay tuned.

© 2026. All rights reserved.
